I noticed others working with the ESP8266 processor and wanted to share my device, which is working but has a list of improvements that I’d like to make before calling it anywhere close to done.

I’m currently using the Adafruit unit with the Arduino IDE, as they document. The board uses a couple of MCP3008 10-bit ADCs to do data acquisition. The ESP reads them over SPI at 2 MHz for a sample rate of 347 voltage/current reads per 60 Hz cycle. The 80 MHz 32-bit processor is in a whole different league from the Arduino. Tons of floating point calculation won’t slow it down.
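For anyone who hasn't talked to an MCP3008 before, here is a rough sketch of the 3-byte single-ended read framing described in the datasheet, written in Python just to show the bit layout. This is not my firmware (that runs in the Arduino IDE); the function names are made up for illustration, and the 3.3 V reference is an assumption.

```python
def mcp3008_command(channel: int) -> bytes:
    """Build the 3-byte single-ended read request for one MCP3008 channel."""
    if not 0 <= channel <= 7:
        raise ValueError("MCP3008 channels are 0-7")
    # Byte 0: start bit. Byte 1: single-ended flag (0x80) plus channel in bits 6..4.
    # Byte 2: padding clocked out while the low 8 result bits come back.
    return bytes([0x01, 0x80 | (channel << 4), 0x00])


def mcp3008_decode(reply: bytes) -> int:
    """Extract the 10-bit result from the 3 bytes clocked back during the transfer."""
    return ((reply[1] & 0x03) << 8) | reply[2]


def counts_to_volts(counts: int, vref: float = 3.3) -> float:
    """Convert a 10-bit code to volts; vref = 3.3 V is an assumed reference."""
    return counts * vref / 1024
```

A mid-scale reply of `00 02 00` decodes to 512 counts, which is half of the reference voltage.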

I transmit the accumulated power to the EMONCMS cloud at 10-second intervals using the onboard WiFi, which seems to work very well. There is software available to facilitate configuring the WiFi network, and the ESP can also work as a server, so a configuration and/or display app could be added.

Right now, I’m trying to deal with some unique challenges that arise from the high sample rates. The plus side is that there seems to be no difference in results when sampling one cycle vs multiple cycles, so the whole 14 channels can be sampled and processed in about 350ms. The downside is that the phase shifting in both the 9V AC voltage sense transformer and the various CTs has become a significant factor.
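To make it concrete why phase shift matters for one number and not the other, here is a minimal Python sketch of the per-cycle calculation on one channel. It is an illustration only, not my actual code: the function name, the 170 V / 10 A amplitudes, and the 14 degree lag in the synthetic data are all assumptions.

```python
import math


def cycle_power(v, i):
    """Return (real_power, apparent_power) from one cycle of paired samples."""
    n = len(v)
    # Remove any DC offset (e.g. the mid-rail bias on the ADC inputs).
    v_mean = sum(v) / n
    i_mean = sum(i) / n
    v0 = [x - v_mean for x in v]
    i0 = [x - i_mean for x in i]
    real = sum(a * b for a, b in zip(v0, i0)) / n       # mean of v*i
    v_rms = math.sqrt(sum(a * a for a in v0) / n)
    i_rms = math.sqrt(sum(b * b for b in i0) / n)
    return real, v_rms * i_rms


# Synthetic single cycle: 347 sample pairs, current lagging voltage by 14 degrees.
N = 347
LAG = math.radians(14.0)
volts = [170.0 * math.sin(2 * math.pi * k / N) for k in range(N)]
amps = [10.0 * math.sin(2 * math.pi * k / N - LAG) for k in range(N)]

real_w, apparent_va = cycle_power(volts, amps)
print(f"real {real_w:.1f} W, apparent {apparent_va:.1f} VA, "
      f"ratio {real_w / apparent_va:.4f}")
```

The ratio of the two is the cosine of the phase angle between the sampled waveforms, so any phase error in the sensors shows up in the real power while Vrms × Irms is unchanged.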

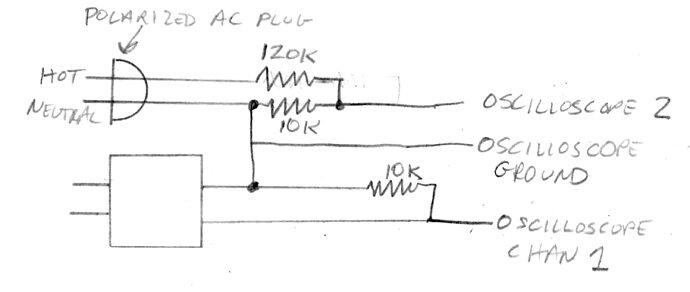

The samples are spaced at just over 1 degree, while I’ve recorded what I think are phase shift errors of 10-19 degrees. I have read Robert Wall’s discussion of the SCT013-000 and the 4-8 degree phase shifting there. I’ve also measured what I think is a 6 degree phase shift in the AC voltage reference transformer. I looked at the phase difference between the voltage and current readings and found it to be about 14 degrees. That figure comes from the number of samples between the sample pairs where each waveform crosses zero when looking at a purely resistive load. Robert - any thoughts? It amounts to about a 2% reduction in real power. Obviously, there is no impact on apparent power (Vrms × Irms).
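Here is roughly how the zero-crossing measurement, and one possible correction, could be sketched in Python. Everything below is an assumption for illustration: the function names, the unit-amplitude synthetic waveforms, and the idea of correcting by shifting the current samples by a whole number of sample periods (which only makes sense if the offset really is sensor error measured on a resistive load, and the buffer holds exactly one cycle).

```python
import math

SAMPLES_PER_CYCLE = 347  # one sample pair is about 360/347 = 1.04 degrees


def rising_zero_crossing(samples):
    """Fractional index of the first upward zero crossing (linear interpolation)."""
    for k in range(1, len(samples)):
        a, b = samples[k - 1], samples[k]
        if a < 0 <= b:
            return (k - 1) + (-a) / (b - a)
    raise ValueError("no rising zero crossing in buffer")


def phase_lag_degrees(v, i, n=SAMPLES_PER_CYCLE):
    """Degrees by which the current lags the voltage, from their zero crossings."""
    delta = rising_zero_crossing(i) - rising_zero_crossing(v)
    return (delta * 360.0 / n) % 360.0


def real_power_shifted(v, i, shift):
    """Mean of v[k] * i[k + shift], wrapping around the one-cycle buffer."""
    n = len(v)
    return sum(v[k] * i[(k + shift) % n] for k in range(n)) / n


# Synthetic resistive-load cycle with a 14 degree apparent lag in the current.
n = SAMPLES_PER_CYCLE
v = [math.sin(2 * math.pi * k / n - math.radians(30)) for k in range(n)]
i = [math.sin(2 * math.pi * k / n - math.radians(44)) for k in range(n)]

lag = phase_lag_degrees(v, i)
shift = round(lag * n / 360.0)           # whole samples to advance the current
raw = real_power_shifted(v, i, 0)        # uncorrected real power
corrected = real_power_shifted(v, i, shift)
print(f"lag {lag:.2f} deg, shift {shift} samples, "
      f"raw {raw:.4f}, corrected {corrected:.4f}")
```

With unit amplitudes the ideal real power is 0.5, so the raw figure is low by the factor cos(14°), which is about 0.97. A whole-sample shift can only correct to within half a sample period, and interpolating between samples would be needed to do better.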

Back to the ESP8266 in general - I’m going to try to add a decoupling transformer and power supply so that I can run the whole thing off the 9V AC reference. Right now I have 5V from an external supply. I’m using a 3.3V regulator for the ADC circuits (the ESP HUZZAH has its own) and it seems to be stable within the margin of error of the phase shifting and cheap CTs that I’m using.

I’ve been very pleased with the prototyping boards from ExpressPCB. I’m using their 2.5x3.8 standard size, and get three delivered, with solder mask and screen for about $70. Quality has been excellent. The application is a lot different from Eagle, and seems easier to manage for what I am doing.

I’ve been concentrating on the power meter application and have not looked at much of the other stuff people are doing, but obviously this processor has the capability to easily add temperature (1-Wire or 10K thermistor) and any number of input/output switches - and probably just about anything else. Right now, board real estate is the governing factor. I have designed it so that two boards can be coupled for a total of 28 power channels. The second board would not have an ESP8266 but attaches with a ribbon cable to the header.

That’s all for now. Looking forward to some discussion and advice from people who might know more about the phase shifting issues. Cheers.