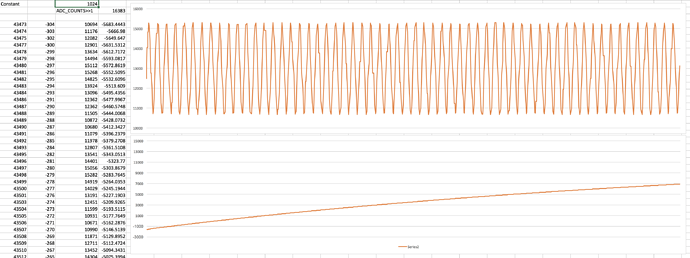

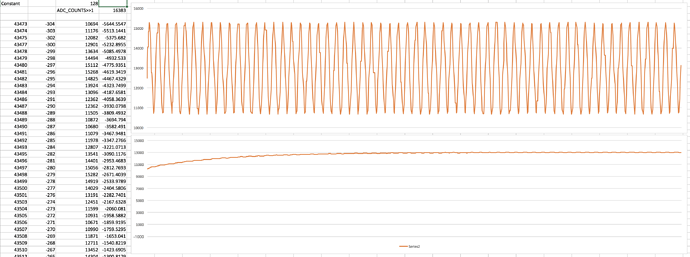

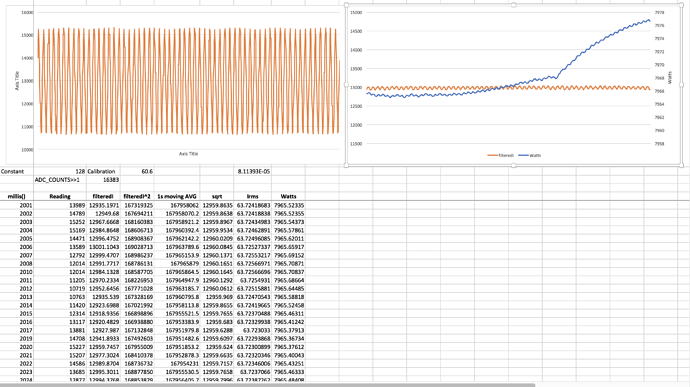

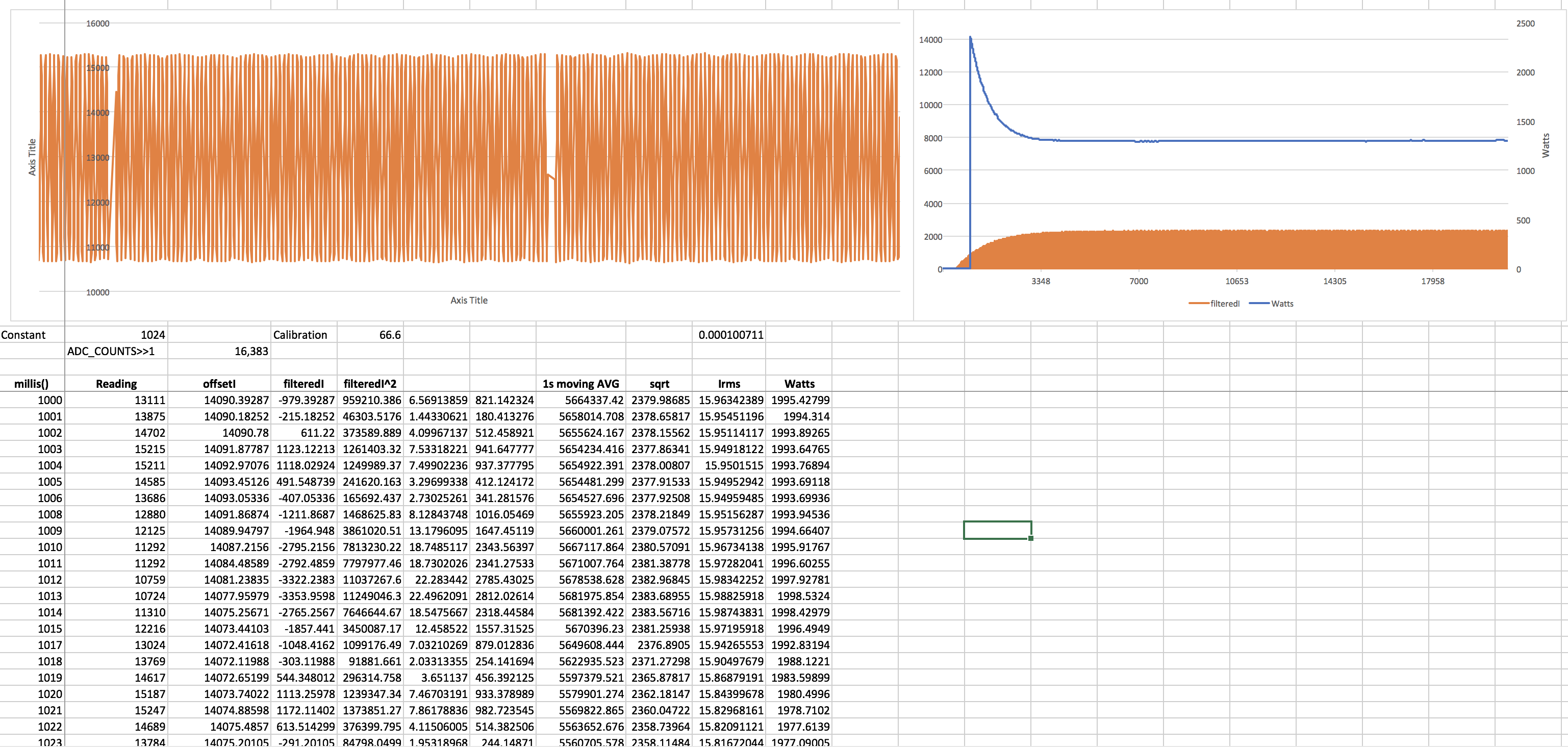

I’m experimenting with a Wemos D1 Mini and using it to read two CT sensors.

I just realized that the sampling algorithm is inherently slow. I was able to raise the ADS1015 data rate to 3200 samples/s and cut its default read delay from 8 ms to 4 ms, but the library still needs a fair amount of time to go through the 1480 samples.
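A rough way to see where the time goes: at a given ADS1015 data rate, each distinct conversion takes at least 1/rate seconds, so the sample count alone puts a floor under how long one RMS calculation can take. The sketch below is plain C++. The function name and the optional per-sample overhead parameter are made up for illustration and are not part of any library.

```cpp
#include <cassert>

// Hypothetical helper: lower bound on the time one RMS calculation can take.
// Every distinct sample needs at least one ADC conversion (1 / samplesPerSecond
// seconds), plus any fixed per-sample overhead such as I2C transfer time or an
// explicit read delay (pass 0 to get the pure data-rate floor).
double minSamplingSeconds(int samples, double samplesPerSecond,
                          double overheadPerSampleSec = 0.0) {
    assert(samples >= 0 && samplesPerSecond > 0.0);
    return samples * (1.0 / samplesPerSecond + overheadPerSampleSec);
}
```

With the numbers from this post, `minSamplingSeconds(1480, 3200.0)` comes to about 0.46 s per CT sensor, or about 0.93 s for both sensors if they are read one after the other, before any I2C or delay overhead is added. So if the photo resistor is only read between calcIrms() calls, it cannot be polled much more often than about once per second, however the delays are tuned.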

The reason this is a problem for me is that I also have a photo resistor on pin 0 of the ADS1015. The photo resistor catches a laser beam that passes through the hole in the meter disc, so I’m basically counting rotations with it.
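Counting rotations from the photo resistor usually comes down to edge detection on its readings. Here is a minimal sketch, assuming the ADC reading goes up when the laser hits the photo resistor (with the opposite voltage-divider wiring it goes down and the comparisons flip). The class name and the thresholds are hypothetical and would need tuning against real readings.

```cpp
#include <cassert>

// Hypothetical helper: counts laser pulses seen by the photo resistor.
// Two thresholds (hysteresis) keep a noisy reading near a single threshold
// from being counted as several pulses.
class RotationCounter {
public:
    RotationCounter(int highThreshold, int lowThreshold)
        : high_(highThreshold), low_(lowThreshold) {
        assert(lowThreshold < highThreshold);
    }

    // Feed one ADC reading; returns true when a new pulse (rising edge) starts.
    bool update(int reading) {
        if (!lit_ && reading >= high_) {
            lit_ = true;        // beam just came through the hole
            ++rotations_;
            return true;
        }
        if (lit_ && reading <= low_) {
            lit_ = false;       // beam blocked again; ready for the next pulse
        }
        return false;
    }

    unsigned long rotations() const { return rotations_; }

private:
    int high_;
    int low_;
    bool lit_ = false;
    unsigned long rotations_ = 0;
};
```

Each `update()` call is cheap, so the counter can be fed from wherever the photo resistor happens to be read. It only works if the light pulse lasts long enough for at least one reading to land inside it, which is exactly why the polling interval matters.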

But for this to work, I need to poll the photo resistor much more often than I can now. I only get a value every 2 or 3 seconds, which is far too infrequent to “catch” the light going through the hole.

Any ideas on how to make things more parallel? Would it be a problem if I polled the photo resistor level inside the calcIrms() loop?
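Polling inside the sampling loop should work in principle. Below is a sketch of the idea written as a standalone function rather than a patch to the library. `sampleRmsWithPolling()` and its callbacks are hypothetical, and it leaves out any offset removal the library does, assuming the samples it receives are already offset-corrected.

```cpp
#include <cassert>
#include <cmath>
#include <functional>

// Hypothetical RMS loop (not the library's calcIrms()): the CT sample reader
// and a "side task" are passed in as callbacks, and the side task runs every
// `pollEvery` samples so the photo resistor is checked while the CT waveform
// is still being sampled.
double sampleRmsWithPolling(int samples, int pollEvery,
                            const std::function<double()>& readCurrentSample,
                            const std::function<void()>& pollSideTask) {
    assert(samples > 0 && pollEvery > 0);
    double sumSquares = 0.0;
    for (int n = 0; n < samples; ++n) {
        double x = readCurrentSample();   // one CT sample, assumed offset-corrected
        sumSquares += x * x;
        if ((n + 1) % pollEvery == 0) {
            pollSideTask();               // e.g. read the photo resistor, update a counter
        }
    }
    return std::sqrt(sumSquares / samples);
}
```

One caveat, assuming the CT sensors and the photo resistor all share the single ADS1015: each photo resistor read switches the input multiplexer and costs at least one conversion. With `pollEvery = 10`, about one conversion in every eleven goes to the photo resistor, which lowers the current-sampling rate and leaves small gaps in the CT waveform. A smaller `pollEvery` catches shorter light pulses at the cost of fewer current samples per second.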

Thanks!