EDITED: as I dither between this working… or not! The first two comments below may no longer make sense.

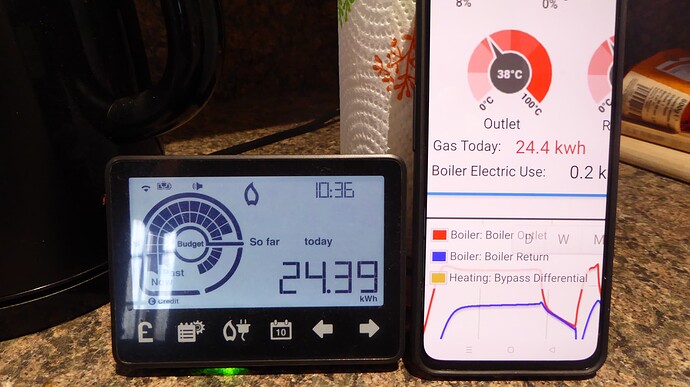

Following Mike Henderson’s clever idea of calculating a proxy for the gas usage of a gas boiler from a CT measuring the electric current drawn by the fan that drives the combustion:

Could the Flame % be calculated knowing only two temperature measurements?

- Boiler Flow and Return

Short Answer

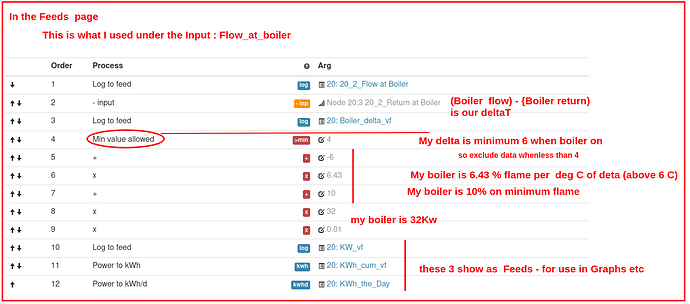

Yes, but you need to be careful to exclude times when the boiler is OFF. Do this by ignoring all samples where the flow/return temperature delta is lower than the minimum it can be when the boiler is lit at minimum flame.
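A minimal Python sketch of that OFF filter, assuming samples arrive as (flow, return) pairs in deg C. The constant `MIN_FLAME_DELTA_C` and the helper `lit_samples` are hypothetical names. The 6 C value is the minimum-flame delta from the calibration further down for this particular boiler, so yours will likely differ.

```python
# Assumption: flow-return delta (deg C) at minimum flame; 6 C for the
# author's boiler per the calibration, yours will likely differ.
MIN_FLAME_DELTA_C = 6.0


def lit_samples(samples, min_delta=MIN_FLAME_DELTA_C):
    """Keep (flow_c, return_c) pairs whose delta suggests the flame is lit.

    Samples with a delta below the minimum-flame delta are treated as OFF.
    """
    return [(flow, ret) for flow, ret in samples if flow - ret >= min_delta]


readings = [(45.0, 43.5), (60.0, 50.0), (70.0, 50.0), (38.0, 37.0)]
print(lit_samples(readings))  # [(60.0, 50.0), (70.0, 50.0)]
```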

Mike’s method uses the fan’s electrical current to know when the boiler is lit, but from pipe temperatures alone we don’t have that ‘signal’.

Details below.

Details

Like the CT method Mike describes, this also assumes that the boiler’s water pump is running at a fixed speed, so the water flow rate through the boiler stays roughly constant.

Basically, the ‘work done’ as the boiler modulates its flame up and down can ONLY show up as a change in the delta between Flow out and Return in. Ain’t nowhere else for it to go.

So when I plotted the delta, it struck me that it is a proxy for Flame %.
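The reasoning rests on the steady-state heat balance for water passing through the boiler: heat output = mass flow × specific heat × delta. The Python sketch below assumes a specific heat of about 4.18 kJ/(kg·K) and assumes the boiler really delivers its 32 kW rating at the 20 C delta measured below. It back-calculates the implied pump flow. The function names are hypothetical.

```python
# Steady-state heat balance for water through the boiler:
#   heat_kw = mass_flow_kg_s * CP_WATER_KJ_PER_KG_K * delta_c
# Assumption: specific heat of water ~4.18 kJ/(kg*K) at heating temperatures.
CP_WATER_KJ_PER_KG_K = 4.18


def heat_output_kw(mass_flow_kg_s, delta_c):
    """Heat picked up by the water for a given flow and flow-return delta."""
    return mass_flow_kg_s * CP_WATER_KJ_PER_KG_K * delta_c


def implied_mass_flow_kg_s(heat_kw, delta_c):
    """Mass flow needed to carry heat_kw at the given delta."""
    return heat_kw / (CP_WATER_KJ_PER_KG_K * delta_c)


# Assumption: full 32 kW rating delivered at a 20 C delta
flow = implied_mass_flow_kg_s(32.0, 20.0)
print(round(flow, 3))                        # 0.383 (kg/s, about 23 litres/min)
print(round(heat_output_kw(flow, 10.0), 1))  # 16.0 (kW at half the delta)
```

With the flow fixed, heat output scales with the delta. An ideal line through zero would put 10% flame at a 2 C delta, whereas the app reading gave 6 C. That is why a fitted two-point calibration is used rather than this ideal proportion.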

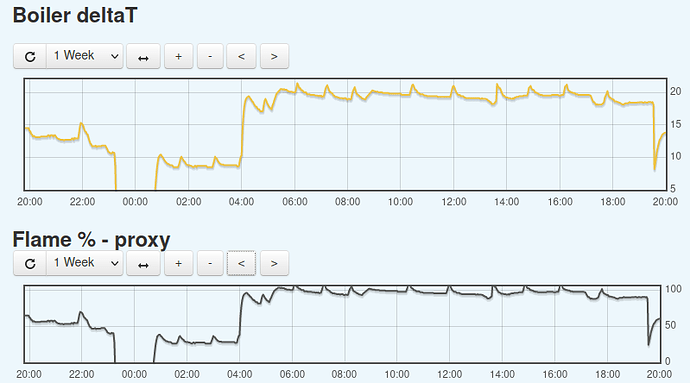

The graphs of Delta and Flame %

To work out the formula, I used Mike’s approach: watching the boiler’s app report Flame % and comparing it with my delta.

I got 100% flame at a delta of 20 C, and 10% (the boiler maker’s claimed minimum) at 6 C.
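Those two app readings define a straight line. A small Python sketch (the helper name `two_point_line` is hypothetical) recovers its slope and intercept:

```python
def two_point_line(x1, y1, x2, y2):
    """Return (slope, intercept) of the straight line through two points."""
    slope = (y2 - y1) / (x2 - x1)
    intercept = y1 - slope * x1
    return slope, intercept


# Calibration points read off the boiler app: (delta C, flame %)
slope, intercept = two_point_line(6.0, 10.0, 20.0, 100.0)
print(round(slope, 2), round(intercept, 2))  # 6.43 -28.57
```

The slope is the 6.43% per deg C used in the steps below. The intercept only says where the line would reach 0% (about 4.4 C), which is below the 6 C minimum-flame point, so it matters only if OFF samples are not filtered out.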

So the maths for my specific boiler was:

- a 14 C delta range (6 C to 20 C) covers a 10-100% flame range, i.e. 90 / 14 ≈ 6.43% per deg C

- so multiply 6.43 by (deltaC less 6), the delta above the minimum-flame value

- then add the 10% of flame back

- then multiply by the boiler power (32 kW), treating the flame % as a fraction, to get kW

- add ‘log’ lines to save kWh and the cumulative daily energy used.
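Putting those steps together gives the minimal Python sketch below. It assumes the calibration constants above (6 C → 10%, 20 C → 100%, 32 kW), evenly spaced samples every `interval_s` seconds, and a simple rectangle-rule sum for energy. Two behaviours are assumptions added here: deltas above 20 C are clamped to 100%, and deltas below 6 C are treated as OFF. All names are hypothetical, not the author’s code.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical constants from the calibration for this specific boiler
MIN_DELTA_C = 6.0      # flow - return delta at minimum flame
MAX_DELTA_C = 20.0     # flow - return delta at 100% flame
MIN_FLAME_PCT = 10.0   # boiler maker's claimed minimum flame
MAX_FLAME_PCT = 100.0
BOILER_KW = 32.0       # rated boiler power

# 90% flame span over a 14 C delta span, about 6.43% per deg C
PCT_PER_C = (MAX_FLAME_PCT - MIN_FLAME_PCT) / (MAX_DELTA_C - MIN_DELTA_C)


def flame_pct(delta_c):
    """Estimated flame %, or 0.0 when the delta says the boiler is OFF."""
    if delta_c < MIN_DELTA_C:
        return 0.0  # below minimum-flame delta: treat as OFF
    pct = (delta_c - MIN_DELTA_C) * PCT_PER_C + MIN_FLAME_PCT
    return min(pct, MAX_FLAME_PCT)  # assumption: clamp deltas above 20 C to 100%


def power_kw(delta_c):
    """Flame % as a fraction, times the boiler's rated power."""
    return flame_pct(delta_c) / 100.0 * BOILER_KW


def daily_kwh(samples, interval_s):
    """samples: iterable of (datetime, delta_c) taken every interval_s seconds.

    Returns {date: kWh}, summing power * time per sample (rectangle rule).
    """
    totals = defaultdict(float)
    for ts, delta_c in samples:
        totals[ts.date()] += power_kw(delta_c) * interval_s / 3600.0
    return dict(totals)


# Example: one hour of 1-minute samples at a 13 C delta (55% flame, 17.6 kW)
hour = [(datetime(2024, 1, 1, 7, minute), 13.0) for minute in range(60)]
print(daily_kwh(hour, 60))  # roughly 17.6 kWh for 2024-01-01
```

Bucketing by calendar date gives the cumulative daily figure directly. A finer sample interval makes the rectangle-rule sum track short burns more closely.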

Ie