Not sure how many people would be interested in integrating AI into their energy monitor… but I started using this open-source AI, Mycroft… it works reasonably well…

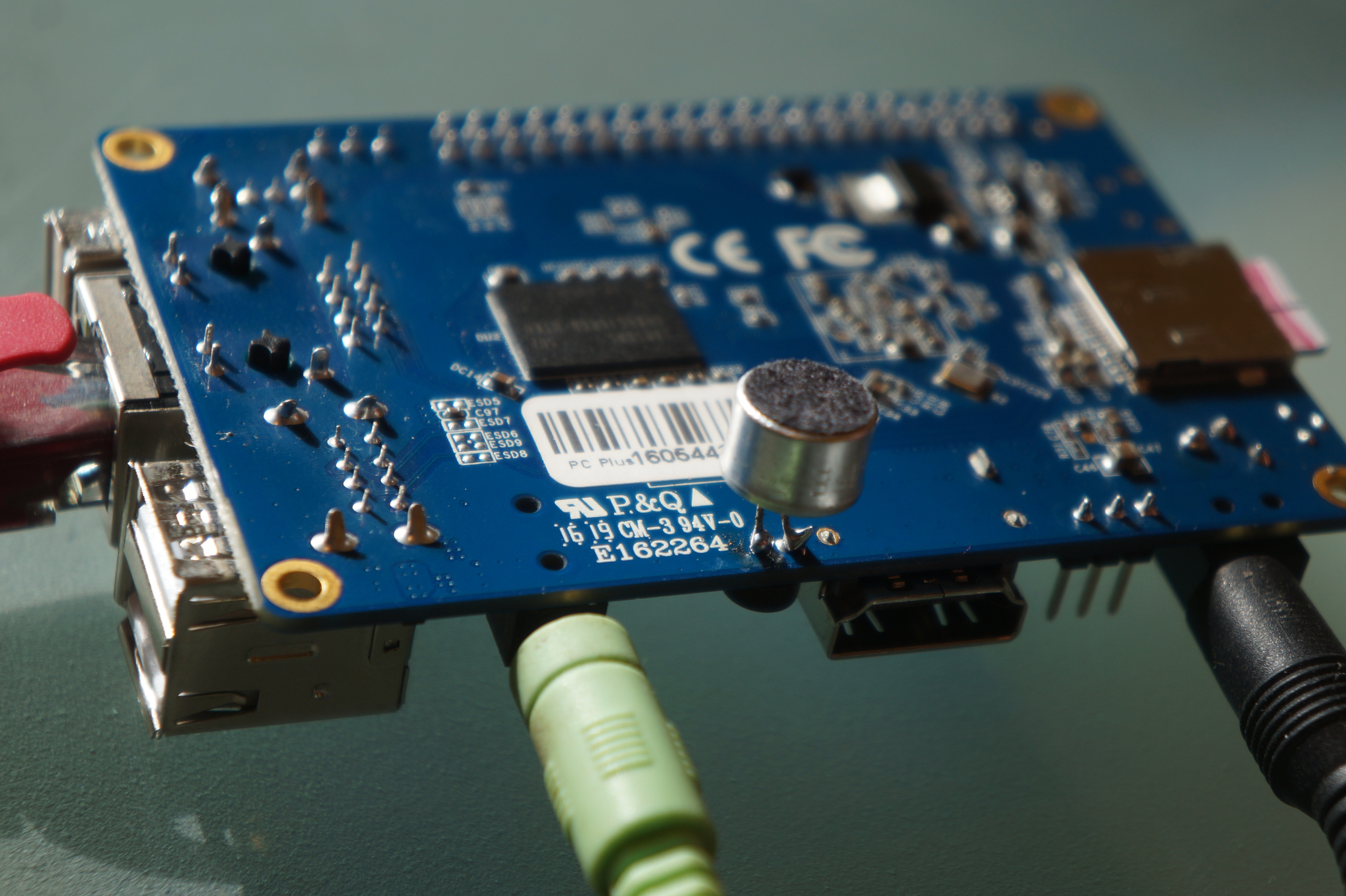

I installed it on a quad-core Orange Pi running Armbian… the hardest thing was getting the audio to work, as you have to use PulseAudio.

and there is very little info on what software is required on top of a small base install, so a lot of guesswork was involved

first off you need to enable your mic, which is relatively simple: run alsamixer, tab over to capture on mic1 and hit the space bar to enable it…
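if you would rather not do it by hand, something like this should do the same thing from a script. It is only a rough sketch: the control name Mic1 is my assumption based on what alsamixer showed on my board, so check the output of amixer scontrols for the name on yours. MIC_CONTROL and enable_mic are just names I made up for the example.

```shell
#!/bin/sh
# Non-interactive alternative to toggling capture in alsamixer.
# "Mic1" is an assumption; run `amixer scontrols` to see the real control name.
MIC_CONTROL="${MIC_CONTROL:-Mic1}"

enable_mic() {
    # "cap" switches capture on for the given mixer control
    amixer sset "$1" cap
}

if command -v amixer >/dev/null 2>&1; then
    amixer scontrols || true                 # list the available mixer controls
    enable_mic "$MIC_CONTROL" || echo "could not enable $MIC_CONTROL"
    # record 5 seconds so you can confirm the mic picks something up
    arecord -d 5 -f cd mic-test.wav || echo "recording failed"
else
    echo "amixer not found (is alsa-utils installed?)"
fi
```

play mic-test.wav back afterwards to hear whether the mic actually recorded anything.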

next you need to install mpv; it pulls in pretty much all the audio prerequisites. you can also install vlc (I browsed through their code and saw them mentioning it for playback, so I installed that as well for good measure)…

apt-get install mpv

download a small test wav file to the device for testing

ie mpv test.wav

when playing the file, mpv will tell you it is using the alsa audio engine

installing PulseAudio is… well, I am not sure if I installed it properly, as there is not a lot of consistent info on how to install it…

apt-get install pulseaudio pulseaudio-utils

next you need to give your user permission to use it, ie

usermod -a -G pulse root

usermod -a -G pulse-access root
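to double check that the group changes took, a small check like this can help. It is just a sketch: in_group and check_pulse_groups are helper names I made up, and the group names are simply the two from the usermod commands above.

```shell
#!/bin/sh
# Report whether a user belongs to the groups added with usermod above.

in_group() {
    # $1 = user, $2 = group; succeeds only on an exact group name match
    id -nG "$1" 2>/dev/null | tr ' ' '\n' | grep -qxF "$2"
}

check_pulse_groups() {
    user="$1"
    for grp in pulse pulse-access; do
        if in_group "$user" "$grp"; then
            echo "$user is in $grp"
        else
            echo "$user is NOT in $grp"
        fi
    done
}

check_pulse_groups root
```

if a group shows as missing, run the usermod command again and log out and back in before retrying.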

log out or reboot

once that is done you can start it with pulseaudio --system (a system-wide instance) or pulseaudio -D (run as a daemon)
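to see whether PulseAudio actually came up, you can poke it with pactl (it comes with pulseaudio-utils). A minimal sketch, where pulse_status is just a helper name I picked:

```shell
#!/bin/sh
# Quick PulseAudio sanity check. Prints a hint instead of aborting
# when the tools are missing or the daemon cannot be reached.

pulse_status() {
    if ! command -v pactl >/dev/null 2>&1; then
        echo "pactl not found (is pulseaudio-utils installed?)"
        return 1
    fi
    if pactl info >/dev/null 2>&1; then
        echo "PulseAudio is reachable"
        pactl list short sinks      # playback devices
        pactl list short sources    # capture devices, the mic should show up here
    else
        echo "PulseAudio is not reachable; try starting it again"
        return 1
    fi
}

pulse_status || true
```

if the mic does not show up under sources, go back and check that capture is enabled in alsamixer.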

if everything is set up right, when you now play an audio file with mpv it will say it is using the pulse audio engine
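you can also ask mpv for a specific output with its --ao option and compare the two. This is a rough sketch that assumes mpv prints a status line starting with "AO: [driver]", which is what I see here, but the exact wording may differ between mpv versions. ao_from_log is a helper name I made up.

```shell
#!/bin/sh
# Pull the audio output driver name out of mpv's "AO: [...]" status line.
ao_from_log() {
    sed -n 's/^AO: \[\([^]]*\)\].*/\1/p'
}

if command -v mpv >/dev/null 2>&1 && [ -f test.wav ]; then
    # --ao selects the audio output driver; try each one in turn
    for driver in alsa pulse; do
        echo "requested $driver, mpv reported: $(mpv --ao="$driver" test.wav 2>&1 | ao_from_log)"
    done
else
    echo "mpv or test.wav not found, skipping"
fi
```

if the pulse run reports nothing, mpv could not open that output, which usually means PulseAudio is not running or the user lacks permission.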

now you can proceed with the Mycroft install; it will take a couple of hours

cd ~/

git clone https://github.com/MycroftAI/mycroft-core.git

cd mycroft-core

bash dev_setup.sh

once done run

./start-mycroft.sh unittest

hopefully it will be error free. it might display errors if the audio files have not finished downloading, as that can take a while after the core has finished installing…

next test your audio with mycroft

./start-mycroft.sh audiotest

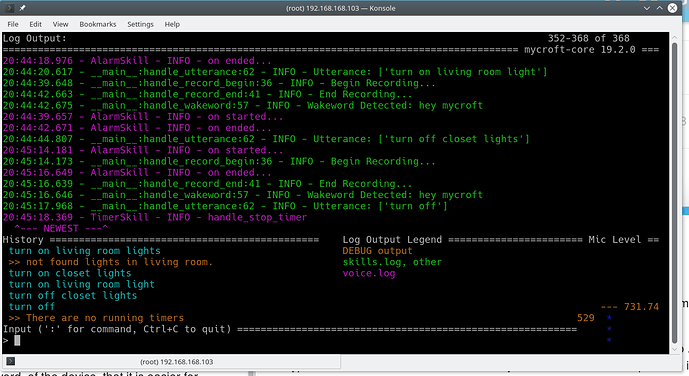

if all worked out well mycroft will now be able to hear you and speak to you.

next just run

./start-mycroft.sh all

to get it up and running and have a fully functional AI.

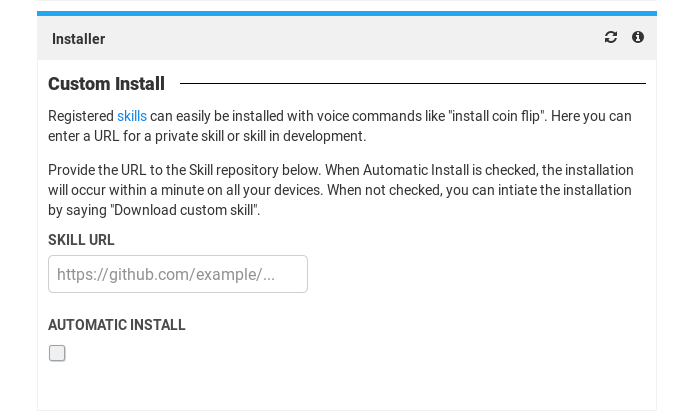

it will give you a pairing key and away you go… pair it on their website

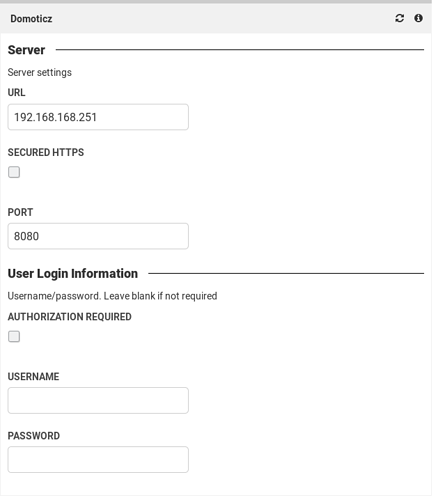

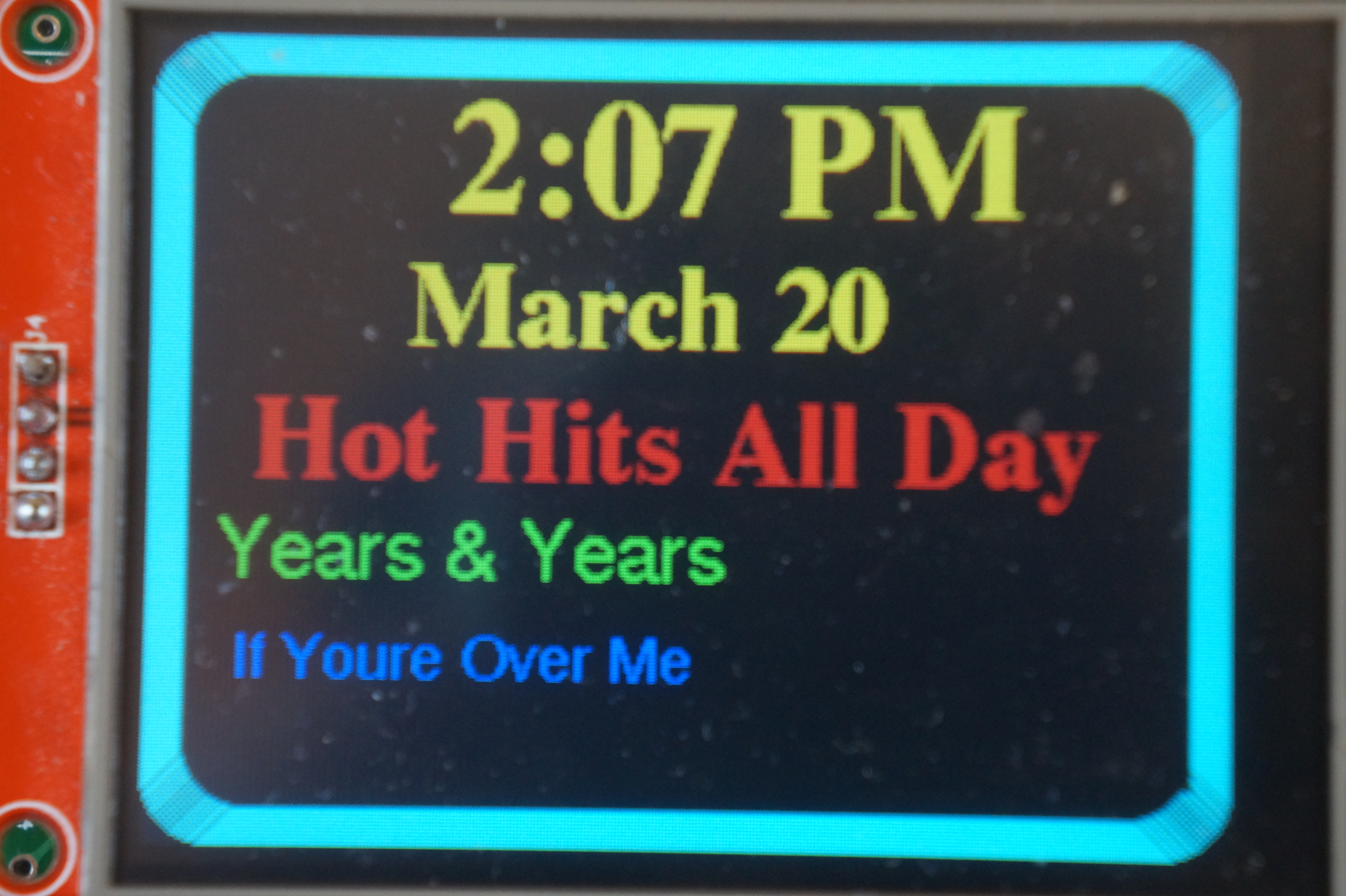

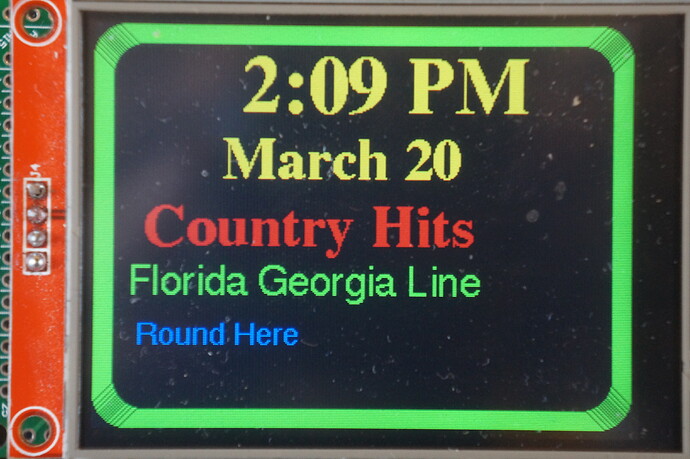

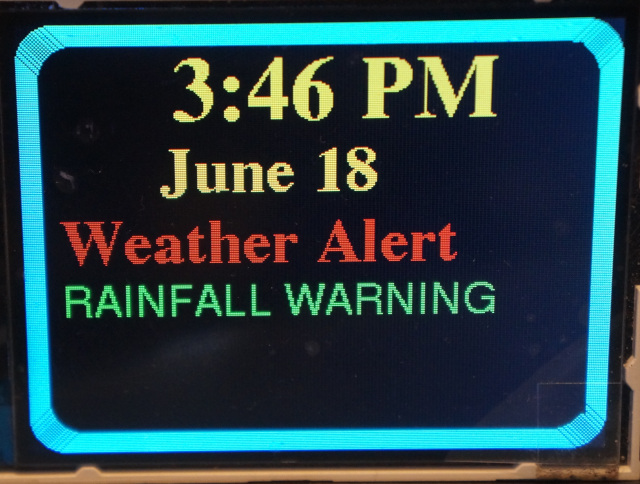

currently I have it running pretty well. I've got it streaming music and doing a lot of other functions. I'm currently trying to get domoticz to work, but it will probably take me a couple of days to understand how that code works… if you are an openHAB user, though, it comes with a plugin that connects to it directly to control your devices, right off the bat…

oh well good luck have fun