I wish to automate a daily emoncms backup to a remote computer. I’m able to do this using Node Red.

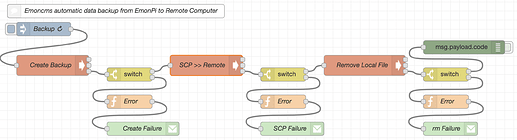

Node Red Flow:

[{"id":"c170246c.445538","type":"exec","z":"a5ce04b2.5a31f8","command":"sshpass -p \"password\" scp ~/data/emoncms-backup-* [email protected]:~/Documents/RPi/EmonPi/Backup/","addpay":false,"append":"","useSpawn":"false","timer":"","oldrc":false,"name":"SCP >> Remote","x":460,"y":200,"wires":[[],[],["8ad4e228.87d7e"]]}]

This flow runs ./emoncms-export.sh, copies the resulting file to the remote computer with scp, and finally removes the original file from the local computer. Each stage is error checked. This works fine, but I would like to create the backup and store it remotely without saving the file to the local computer's SD card, i.e. gzip and scp straight to the remote machine, with no file saved locally.

So I thought I could modify…

gzip -fv $backup_location/emoncms-backup-$date.tar 2>&1

in emoncms-export.sh

by piping the scp command, something like this…

gzip -fv $backup_location/emoncms-backup-$date.tar 2>&1 | sshpass -p "password" scp ~/data/emoncms-backup-* [email protected]:~/Documents/RPi/EmonPi/Backup/

(I know I can create ssh keys to eliminate the password requirement)

I think I’m close but not there yet. I know this piped command does not work, because it creates two remote backup files: filename.tar (7 MB) and filename.tar.gz (1.7 MB).
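My understanding of why it fails: scp copies named files and never reads standard input, so the pipe only carries gzip's verbose messages. Both commands also start at the same time, so the ~/data/emoncms-backup-* glob picks up whatever files exist at that moment. A direction that might work instead, as an untested sketch, is to have tar write the archive to stdout, compress it in the pipe, and let ssh (rather than scp) write the stream to a file on the remote. The function name stream_backup, and the user, host, and paths in the usage comment, are placeholders of mine and not part of emoncms-export.sh:

```shell
#!/bin/sh
# Sketch: stream a gzip-compressed tar archive into a receiving command,
# so no archive file is ever written to the local disk.
# Usage: stream_backup SRC_DIR RECEIVER_COMMAND [ARGS...]
stream_backup() {
    src_dir=$1
    shift
    # tar -cf - writes the archive to stdout, gzip -c compresses stdin to
    # stdout, and the receiver command reads the compressed stream on stdin.
    tar -C "$src_dir" -cf - . | gzip -c | "$@"
}

# Hypothetical call (assumes ssh keys are set up; user, host and paths are placeholders):
# stream_backup "$HOME/data" ssh user@remote-host \
#     "cat > ~/Documents/RPi/EmonPi/Backup/emoncms-backup-$(date +%Y-%m-%d).tar.gz"
```

The remote side only needs cat to write the stream to a file. This covers just the tar and gzip step; anything else emoncms-export.sh puts into the archive would still need to be fed into the same pipe.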

If I can get this to work I’ll then add it as a cron job.

Can anyone help me define the correct syntax for piping gzip into scp, if this is possible?

Thanks in advance

Neil