Have you had a look at the PVO add status API?

I just found:

>>> print zip(names, rows[0])

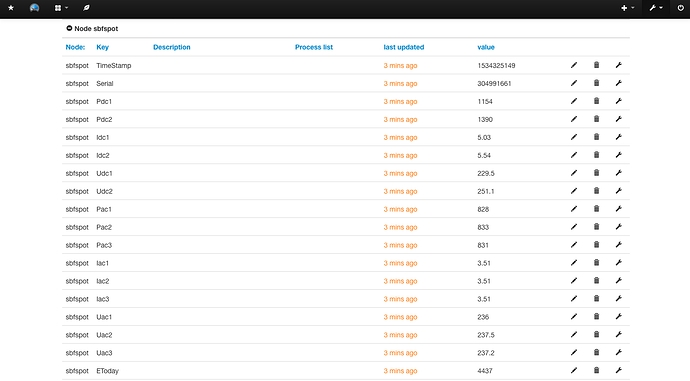

[('TimeStamp', 1534325149), ('Serial', 304991661), ('Pdc1', 1154), ('Pdc2', 1390), ('Idc1', 5.033), ('Idc2', 5.538), ('Udc1', 229.46), ('Udc2', 251.13), ('Pac1', 828), ('Pac2', 833), ('Pac3', 831), ('Iac1', 3.509), ('Iac2', 3.508), ('Iac3', 3.507), ('Uac1', 236.01), ('Uac2', 237.54), ('Uac3', 237.17), ('EToday', 4437), ('ETotal', 13844060), ('Frequency', 49.99), ('OperatingTime', 12077.6), ('FeedInTime', 11648.3), ('BT_Signal', 0.0), ('Status', u'OK'), ('GridRelay', u'Closed'), ('Temperature', 72.53)]


A library would be great, I’ll have a look. I now have a K V dict to work from at least.

Don’t know if it’s any help or not, but here’s a Python snippet that sends my data to PVO.

(Not trying to get you to do it in Python, just an example of the string format PVO is expecting)

    import time
    import requests

    DATE = time.strftime('%Y%m%d')
    TIME = time.strftime('%H:%M')  # same result as '%R', but portable
    Headers = {'X-Pvoutput-Apikey':'xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx', 'X-Pvoutput-SystemId':'17152',}
    # GENW, CONS and VOLT are set elsewhere: generated watts, consumed watts, volts
    Data = {'d':DATE, 't':TIME, 'v2':GENW, 'v4':CONS, 'v6':VOLT}
    requests.post('http://pvoutput.org/service/r2/addstatus.jsp', headers=Headers, data=Data)

I hadn’t, that’ll be useful later maybe.

I try to do everything in Python if I can get away with it.

OK. So there are lots of options… I’m reading directly from the database on the Pi, however the API can be used to get/post the data from/to PVO.
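For anyone going the PVO route from the same database record, here is a minimal sketch of how one SBFspot-style row might be mapped onto addstatus fields. The function name `pvo_status_payload` is made up for illustration, the choice of `Uac1` for `v6` is arbitrary, and I'm assuming `EToday` is in Wh and that sending the inverter temperature as `v5` is acceptable for your setup.

```python
from datetime import datetime

def pvo_status_payload(record, when=None):
    """Map one SBFspot-style record onto PVOutput addstatus fields (hypothetical helper)."""
    # Use the record's own TimeStamp (epoch seconds, local time) unless a datetime is given.
    when = when or datetime.fromtimestamp(record["TimeStamp"])
    return {
        "d": when.strftime("%Y%m%d"),   # date as yyyymmdd
        "t": when.strftime("%H:%M"),    # time as hh:mm
        "v1": record["EToday"],         # energy generated today (assumed to be Wh)
        "v2": record["Pac1"] + record["Pac2"] + record["Pac3"],  # total AC power, W
        "v5": record["Temperature"],    # inverter temperature, degrees C (assumption)
        "v6": record["Uac1"],           # voltage, V (phase 1 picked arbitrarily)
    }

record = {"TimeStamp": 1534325149, "EToday": 4437, "Pac1": 828, "Pac2": 833,
          "Pac3": 831, "Uac1": 236.01, "Temperature": 72.53}
payload = pvo_status_payload(record)
print(payload["v2"])  # sum of the three phase powers
```

The resulting dict could be passed as `data=` to the `requests.post` call shown earlier, alongside the API key and system ID headers.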

I had a moment while reading the JSON doco of “what on earth does JSON even mean”.

I take it you weren’t up to speed on the term JavaScript Object Notation?

Same here.

The Python module Requests makes doing this kind of thing much easier.

True. https://www.json.org/

    def OrderedDict_to_JSON():
        # https://docs.python.org/2/library/collections.html#collections.OrderedDict
        global smaJSONstring
        names_firstrow_ordered = OrderedDict(zip(names, rows[0]))
        smaJSONstring = json.dumps(names_firstrow_ordered)
        print(smaJSONstring)

Produces:

{"TimeStamp": 1534325149, "Serial": 304991661, "Pdc1": 1154, "Pdc2": 1390, "Idc1": 5.033, "Idc2": 5.538, "Udc1": 229.46, "Udc2": 251.13, "Pac1": 828, "Pac2": 833, "Pac3": 831, "Iac1": 3.509, "Iac2": 3.508, "Iac3": 3.507, "Uac1": 236.01, "Uac2": 237.54, "Uac3": 237.17, "EToday": 4437, "ETotal": 13844060, "Frequency": 49.99, "OperatingTime": 12077.6, "FeedInTime": 11648.3, "BT_Signal": 0.0, "Status": "OK", "GridRelay": "Closed", "Temperature": 72.53}

In the JSON string, there are values containing alpha-numeric strings for “Status” and “GridRelay”.

How does emonCMS handle this? Should I get rid of these keys?
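I'm not certain how emonCMS treats non-numeric values, so this is only a sketch of one option: dropping them before building the JSON string. The helper name `numeric_only` is hypothetical, and the sample record is a cut-down version of the one above.

```python
import json
from collections import OrderedDict
from numbers import Number

def numeric_only(record):
    """Return a copy of record keeping only int/float values, in the original order."""
    return OrderedDict(
        (key, value) for key, value in record.items()
        if isinstance(value, Number) and not isinstance(value, bool)
    )

sample = OrderedDict([("Pac1", 828), ("Frequency", 49.99),
                      ("Status", "OK"), ("GridRelay", "Closed")])
filtered_json = json.dumps(numeric_only(sample))
print(filtered_json)  # {"Pac1": 828, "Frequency": 49.99}
```

An alternative would be to map the strings to numbers (for example 1 for "OK"), but that needs a list of every status value SBFspot can emit, which I don't have.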

Recap/sanity check… My plan is to create a script which runs alongside SBFspot.

If I get that far it will:

- poll the SBFspot database every few seconds to find an updated record in table ‘SpotData’ at index[0].

- send the data to emonCMS locally or remotely. At the moment the method is to create a JSON string of that most recent record at index[0], somehow include the relevant API key, and post it to emonCMS.
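The polling step in the plan above could be sketched roughly like this. It assumes the SBFspot database is SQLite and that ordering by `TimeStamp` gives the most recent record; the function names are made up, and an in-memory table stands in for the real database so the snippet runs on its own.

```python
import sqlite3

def latest_record(conn):
    """Return the newest SpotData row as a dict, or None if the table is empty."""
    cur = conn.execute("SELECT * FROM SpotData ORDER BY TimeStamp DESC LIMIT 1")
    row = cur.fetchone()
    if row is None:
        return None
    names = [col[0] for col in cur.description]
    return dict(zip(names, row))

def fetch_if_new(conn, last_seen):
    """Return (record, timestamp) when a newer row exists, else (None, last_seen)."""
    record = latest_record(conn)
    if record is None or record["TimeStamp"] == last_seen:
        return None, last_seen
    return record, record["TimeStamp"]

# Stand-in database so the sketch runs without SBFspot installed.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE SpotData (TimeStamp INTEGER, Pac1 INTEGER)")
conn.execute("INSERT INTO SpotData VALUES (1534325149, 828)")

first, seen = fetch_if_new(conn, None)    # new row: a record comes back
second, seen = fetch_if_new(conn, seen)   # nothing new: None comes back
```

In a real script, `fetch_if_new` would sit in a loop with a `time.sleep` of a few seconds, and each non-None record would be turned into JSON and posted.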

I think the main advantage of this method is that it caters for those who don’t have emonCMS running on their Pi as well as those who do. Also, for local installs, having the script running locally means data is collected into emonCMS regardless of internet connectivity. Plus, as I see it, SBFspot is well-maintained for ethernet and bluetooth.

Having emoncms.org provide a wrapper to grab data from PVO is definitely also a good idea.

EDIT: I’m still unsure if that’s the proper use of the term ‘wrapper’.

EDIT EDIT: I also see the benefit of having that wrapper on a local emonCMS, although I imagine it’ll be used less often.

Not quite, my suggestion of the “wrapper” AROUND emoncms was so that emoncms emulates PVOutput in that if you point a program written to talk to PVOutput to the wrapper on emonCMS it wouldn’t know the difference.

Don’t let this stop you in your current task though, sounds like you are on a route to success.

Couple of points to watch out for:

- MySQL (or MariaDB) will probably write to the SD card a lot, so wear may become a problem and eventually kill the card in your Raspberry Pi

- MySQL will eventually fill up the SD card, so think about truncating the data to keep a rolling week or so of data if you don’t intend to do anything with it except push into emoncms.

- You may be able to configure MySQL (see point 1) to use in-memory tables to save on disk writes, but available RAM may be a limit on the Raspberry Pi
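The rolling-week idea from the second point can be sketched as a single DELETE keyed on the timestamp. This is only an illustration: it uses `sqlite3` as a stand-in so it runs anywhere, the `SpotData`/`TimeStamp` names are taken from earlier in the thread, and `prune_old_rows` is a hypothetical helper. With a MySQL driver the placeholder would be `%s` rather than `?`, and it's worth checking that nothing else (such as the upload daemon) still needs the older rows.

```python
import sqlite3

WEEK_SECONDS = 7 * 24 * 60 * 60

def prune_old_rows(conn, now, keep_seconds=WEEK_SECONDS):
    """Delete SpotData rows older than keep_seconds; return how many were removed."""
    cutoff = now - keep_seconds
    cur = conn.execute("DELETE FROM SpotData WHERE TimeStamp < ?", (cutoff,))
    conn.commit()
    return cur.rowcount

# Stand-in table: one row eight days old, one a day old, one current.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE SpotData (TimeStamp INTEGER, Pac1 INTEGER)")
now = 1534325149
conn.executemany("INSERT INTO SpotData VALUES (?, ?)",
                 [(now - 8 * 86400, 100), (now - 86400, 200), (now, 300)])

removed = prune_old_rows(conn, now)
remaining = conn.execute("SELECT COUNT(*) FROM SpotData").fetchone()[0]
```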

Progress

    def url_stringing():
        global URLstring
        emoncmsaddress = "emoncms.org"
        node_id = "sbfspot"
        # smaJSONstring and apikey are module-level globals set elsewhere
        URLstring = "https://" + emoncmsaddress + "/input/post?node=" + node_id + "&fulljson=" + str(smaJSONstring) + "&apikey=" + apikey
        print(URLstring)

    def post_to_emonCMS():
        r = requests.get(URLstring)
        print(r)
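One thing to watch with plain string concatenation is that the JSON contains quotes and spaces, which ideally get percent-encoded in a URL. A minimal sketch of the same URL built with the standard library's `urlencode` follows; the function name `build_emoncms_url` and the placeholder API key are made up, and the `node`, `fulljson` and `apikey` parameters are simply the ones used above.

```python
import json
from urllib.parse import urlencode, urlsplit, parse_qs

def build_emoncms_url(host, node_id, record, apikey):
    """Build an emonCMS input/post URL with every parameter percent-encoded."""
    query = urlencode({
        "node": node_id,
        "fulljson": json.dumps(record),
        "apikey": apikey,
    })
    return "https://" + host + "/input/post?" + query

url = build_emoncms_url("emoncms.org", "sbfspot",
                        {"Pac1": 828, "Status": "OK"}, "PLACEHOLDER_KEY")

# Decoding the query string recovers the original JSON text unchanged.
decoded = parse_qs(urlsplit(url).query)
print(decoded["fulljson"][0])
```

The resulting `url` can be handed to `requests.get(url)` as before. Passing the same dict through the `params=` argument of `requests.get` should give the same encoding without building the string by hand.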

The problem I mentioned earlier, SBFspot not running after a restart, was that the UploadDaemon was unable to write to a directory (and I was unable to change the directory permissions).

The solution was to move the smadata folder to /home/pi/data/, which has permissions 777 set by default, and to change the SBF conf and UploadDaemon conf files to point to the new location.

New thread is here for redis CPU load:

I’m trying to type a # in dataplicity on mac and it’s driving me nuts.

I have my Python script started by a .sh, which is itself started by cron; the script’s on the same interval as SBFspotUploadDaemon.

Why not start the Python script directly from your crontab?

That’d be simpler.

I’m trying also to make it start a few seconds after the upload daemon…

Would a sleep command near the start of your Python script do the trick?

It would.

I’ve realised though that a sleep command is in fact unnecessary.

The core SBFspot program writes to the database independently of the UploadDaemon, so my script, running a few ms after the UploadDaemon, will be grabbing the same data from the database. All good.